It’s been two weeks since Google announced a change to the reporting of keyword Quality Scores in AdWords. The stated intention was to provide more transparency about those scores – a traditional problem for Google. Failing at that, the announcement has led to some confusion among marketers. Let’s look at what really happened.

To better understand keyword Quality Scores, we closely track a large number of keywords across our clients’ accounts at Bloofusion. Since our data suggests that the update has already occurred, it’s time to look at the changes.

The Update

About a week after the announcement, on August 2nd, we observed a lot of Quality Score changes over a period of 13 hours. Those changes happened simultaneously across several accounts. In the end, about half of our keywords had been assigned a new Quality Score.

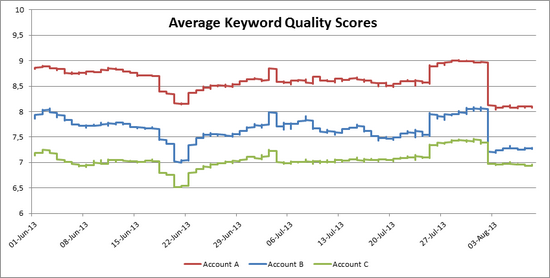

To see the overall change, take a look at this chart:

The effect of the latest update is clearly visible on the right side, where all three accounts experience a sudden drop of their average Quality Score. However dramatic this looks, Google announced that the change would only affect visible Quality Scores, so there’s no need to be alarmed.

What’s interesting here is that there are similarities in the curves, even though the accounts are from different advertisers. There’s no good reason for everyone’s Quality Scores dropping temporarily in June or temporarily rising two weeks later. Even the drop after the announced update was preceded by a (smaller) jump a few days earlier. This suggests that Google had been tweaking Quality Score reporting even before the announcement. It also suggests that changes in keyword Quality Scores aren’t necessarily connected to anything that happens in an account.

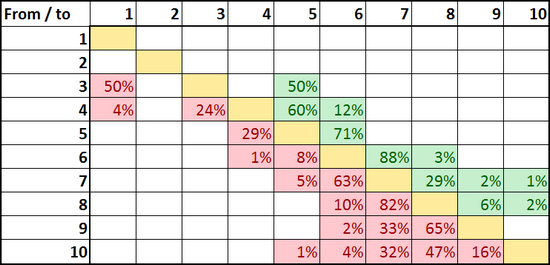

We also looked at the migration of Quality Scores during the update:

(Read: When a Quality Score of 3 changed, in 50% of all cases it became a 1 and in 50% of all cases it became a 5)

As you can see, most of the changes were small; just one or two points up or down. The update changed about half of our Quality Scores, but it didn’t turn our accounts upside down.
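If you want to build a similar migration table from your own data, here is a minimal Python sketch. It assumes you have two snapshots of keyword Quality Scores, one from before and one from after the update. The function name `migration_shares` and the sample keywords are made up for illustration, and this is not necessarily how our own numbers were produced.

```python
from collections import Counter, defaultdict


def migration_shares(before, after):
    """For each old score, return the share of *changed* keywords per new score."""
    moves = defaultdict(Counter)
    for keyword, old in before.items():
        new = after.get(keyword)
        # Only count keywords that exist in both snapshots and actually changed.
        if new is not None and new != old:
            moves[old][new] += 1
    return {
        old: {new: count / sum(targets.values()) for new, count in targets.items()}
        for old, targets in moves.items()
    }


# Made-up snapshots (keyword -> Quality Score), for illustration only.
before = {"kw_a": 3, "kw_b": 3, "kw_c": 10, "kw_d": 7}
after = {"kw_a": 1, "kw_b": 5, "kw_c": 8, "kw_d": 7}

shares = migration_shares(before, after)
print(shares[3])
```

With this sample data, half of the changed threes became a 1 and half became a 5. Unchanged keywords such as `kw_d` are left out, which matches the "when a Quality Score changed" reading above.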

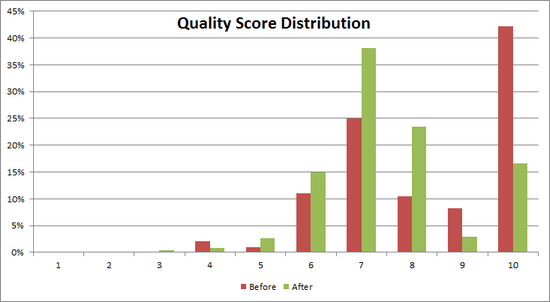

Still, the overall distribution of Quality Scores clearly changed:

Before the change, there were 42% tens in the accounts. Now their share is at 17%, while sevens and eights are much more common.

What did Google do?

When I first read Google’s announcement I realized that it doesn’t clearly say what the update was about. In the first paragraph they write:

Under the hood, this reporting update will tie your 1-10 numeric Quality Score more closely to its three key sub factors — expected clickthrough rate, ad relevance, and landing page experience.

And in the second paragraph:

We’re making this change so that the Quality Score in your reports more closely reflects the factors that influence the visibility and expected performance of your ads.

It’s possible to read this as Google wanting to better align keyword Quality Score with auction Quality Score (the thing that actually matters when it comes to position and click prices). Judging from what I’ve heard so far, this seems to be the common interpretation.

However, in the ad auction, Quality Score is all about click-through probability, a.k.a. expected click-through rate. Everything else, like ad relevance, is just a way to narrow down click-through probability until there is enough historical data. Since only one of the “three key sub factors” really matters, tying keyword Quality Score to all three of them isn’t going to bring it closer to the actual auction Quality Score. So even though the industry has already accepted the interpretation of Google giving us a more meaningful Quality Score, that’s not what happened.

What really happened

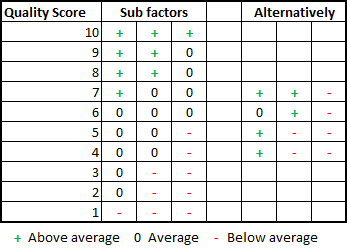

Instead what Google did was very simple – they did exactly what they wrote first: They tied keyword Quality Scores more closely to the three sub factors. Not the three sub factors as they use them in the ad auction, of course. They’ve tied keyword Quality Score to the three sub factors they report in the interface, like here:

Maybe you’ve read this article from Brad Geddes a year ago. In the article, he goes through several examples of how the reported Quality Scores and sub factors didn’t fit very well. For example, when everything is average or above average and the reported score is still just a four. He concluded that in many cases this didn’t make much sense and raised more questions than it answered. And that’s exactly the issue that this update addressed.

Looking at the QS 10 keywords in our accounts, the three sub factors are all above average – everywhere I look. With QS 1 it’s the opposite: Everything is below average. Overall I found a pretty strong connection between the three sub factors and Quality Scores. It looks like this:

So for example, if a keyword has one factor above average and the other two are average, it’s a seven. Or, if there are two above and one below average, that’s a seven, too. I found a few examples that were different, but in the vast majority of cases the above is true for active keywords. By the way, if everything is average, it’s a six, meaning six should be regarded as the “average” Quality Score from now on.
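To make the pattern concrete, here is a small Python sketch that encodes only the combinations mentioned in this post. It is a lookup of observations, not Google’s formula. The names `OBSERVED_SCORES` and `observed_score` are hypothetical, and it assumes that "all above average" maps to a 10 and "all below average" to a 1, which is what we saw in our accounts. Combinations not mentioned here return `None` rather than a guess.

```python
from collections import Counter

# Only the combinations described in this post, keyed by
# (factors above average, factors average, factors below average).
# Assumption: these are observations from our accounts, not an official rule.
OBSERVED_SCORES = {
    (3, 0, 0): 10,  # everything above average
    (1, 2, 0): 7,   # one above, two average
    (2, 0, 1): 7,   # two above, one below
    (0, 3, 0): 6,   # everything average
    (0, 0, 3): 1,   # everything below average
}


def observed_score(expected_ctr, ad_relevance, landing_page):
    """Return the score seen for this combination, or None if not covered."""
    counts = Counter([expected_ctr, ad_relevance, landing_page])
    key = (counts["above"], counts["average"], counts["below"])
    return OBSERVED_SCORES.get(key)


print(observed_score("above", "average", "average"))
print(observed_score("above", "above", "average"))
```

The first call returns 7. The second returns `None`, because that combination isn’t covered by the examples in this post.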

Consequences

The update ties visible Quality Scores to the three sub factors equally, even though their importance for auction Quality Score is far from equal. This means that keyword Quality Score is by no means a substitute KPI for auction Quality Score. Instead, it now reflects its three components and doesn’t seem to hold any meaning beyond that.

An especially big problem is that a third of visible Quality Score is now made up of Google’s evaluation of the landing page. This factor doesn’t play a big role in the actual ad auction (read more on this here) and it’s very error-prone since it’s hard to evaluate landing pages algorithmically. This may well lead to a renaissance of the myth that keywords are important to have on landing pages.

For most marketers this is hopefully just another sign of how little keyword Quality Score actually matters. It can still be used to find problems, but since this update further removes keyword Quality Score from the actual auction Quality Score, its usefulness is fading.

tl;dr

Visible Quality Score is now nothing more than expected CTR + ad relevance + landing page experience. Don’t put too much faith into it. Instead, look at this meme:

(cross posted from our German blog)

Martin Roettgerding is the head of SEM at SEO/SEM agency Bloofusion Germany. You can find him on LinkedIn.

Great Post Martin!

We also saw some fluctuations in QS over the past weeks, due to the update. Most curious is the ‘expected CTR’. This is maybe a response to an (old) post: http://www.epiphanysearch.co.uk/blog/decoding-the-quality-score-2/. In our checks it also really looks like Google has expectations for a CTR in correlation with a keyword and the position. I’m curious about the real impact. To be continued I guess.

Excellent analysis Martin. I agreed with you 100% until your last line:

‘Don’t put too much faith into it.’

I’m a big believer in the power of quality score to lower CPA, and thus CPC.

I mean Larry Kim just blogged about the power of QS to reduce CPA & CPC:

http://www.wordstream.com/blog/ws/2013/07/16/quality-score-cost-per-conversion

There’s certainly a correlation between higher keyword (!) QS and lower CPA. But that doesn’t mean that the number means much for the individual keyword. There’s a reason analyses like this are done at a very high level: there’s nothing to be seen on a small scale. If the number changes from 5 to 7 it won’t change your CPC, or ad position, or CPA.

Also note that this latest update explicitly changes the nature of keyword Quality Scores and connects them to the three factors. This means that the new QS is even further apart from the real thing than the old one.

I’ve always said don’t put too much faith into keyword (!) Quality Score. But now I’d say it’s even less useful.

Excellent article, Martin.

Since the AdWords model is an auction, provided all QS are similarly affected across all advertisers, the net effect should be nil. I know that we all fixate a little on getting the 10/10 – but if everyone else can only get a 7/10, then our 8/10 is ahead of the game…. or, alternatively, if I had a 10 and you had a 9 – and now I only have a 7, but you have a 6…. well, you get my point!

Quality Score is a puzzle that Google will not explain to us, because if we could hack the Quality Score there would be unfair competition in the ad auction, so let it be a puzzle.

Cheers